Concept Development and Use of an Automated Food Intake and Eating Behavior Assessment Method

Summary

This protocol describes a new technology-based dietary assessment method. The method consists of a dining tray with multiple built-in weighing scales and a video camera. The device is unique in that it combines automated measures of food and drink intake with automated measures of eating behavior over the course of a meal.

Abstract

The vast majority of dietary and eating behavior assessment methods are based on self-reports, which are burdensome and prone to measurement error. Recent technological innovations allow for the development of more accurate and precise dietary and eating behavior assessment tools that require less effort from both the user and the researcher. Therefore, a new sensor-based device to assess food intake and eating behavior was developed. The device is a regular dining tray equipped with a video camera and three separate built-in weighing stations. The weighing stations continuously measure the weight of the bowl, plate, and drinking cup over the course of a meal. The video camera, aimed at the face, records eating behavior characteristics (chews, bites), which are analyzed using artificial intelligence (AI)-based automatic facial expression analysis software. The tray weight and video data are transmitted in real time to a personal computer (PC) via a wireless receiver. Outcomes of interest, such as the amount eaten, eating rate, and bite size, can be calculated by subtracting the values of these measures at the timepoints of interest. The information obtained with the current version of the tray can be used for research purposes; an upgraded version of the device would also facilitate more personalized advice on dietary intake and eating behavior. Unlike conventional dietary assessment methods, this device measures food intake directly within a meal and does not depend on memory or portion size estimation. Ultimately, the device is therefore suited to measuring food intake and eating behavior during daily main meals. In the future, this technology-based dietary assessment method can be linked to health applications or smartwatches to obtain a complete overview of exercise, energy intake, and eating behavior.

Introduction

In nutrition research and dietary practice, good measures of what, how much, and how people eat are key to finding solutions to overweight and obesity. Dietary intake is often assessed with conventional self-report instruments such as food diaries, 24 h recalls, or food frequency questionnaires1. Because these methods rely on self-report, they are time-consuming and prone to bias due to socially desirable answers, inaccurate memory, and difficulties in estimating portion sizes2,3. In addition to measures of diet quality (food type and amount eaten), it is also important to know how the food is eaten, as eating behaviors that slow down food intake have been shown to prevent overconsumption within a meal4. The gold standard for assessing eating behavior is to have two observers annotate video recordings of people eating a meal5. This method is labor-intensive and time-consuming and does not allow immediate feedback on the behavior.

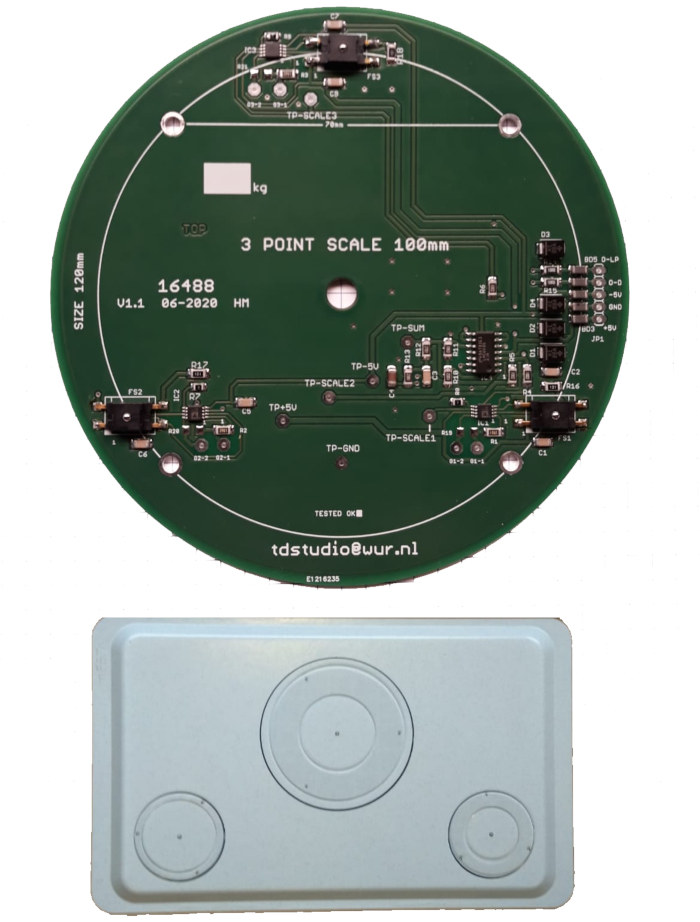

Recent technological advances now make it possible to combine automated measures of food intake with automated measures of eating behavior over the course of a meal. In response to these developments, a new sensor-based dietary assessment method was developed, called the mEETr, an acronym combining the Dutch words 'Meter' (measuring device) and 'eet' (to eat). The mEETr is a regular dining tray with three built-in weighing stations (Figure 1 shows the design of the tray and the sensor plates) and a video camera holder. Each weighing station consists of three measurement points arranged in a triangle to distribute the weight. The weighing stations continuously measure the weight of the bowl, plate, and drinking cup or glass over the course of the meal. Currently, the camera holder is separate from the tray; for standardization purposes, a camera integrated into the tray (a folding video camera stick) would be ideal in the next upgrade of the mEETr. The camera enables automated real-time analysis of the number of bites and chews and of eating duration, from which eating rate and bite size can be derived. Eating behavior is analyzed automatically with a newly developed algorithm. Various research groups have developed devices that give people real-time feedback on the acceleration of eating and on the quantity they eat6. Augmented forks have also been developed to provide real-time feedback on the number and frequency of bites within a meal7. Additionally, an ear sensor was developed to measure the microstructure of eating in free-living conditions8,9. The set-up used by Ioakimidis et al.10 is similar to this device: video measures were combined with a weighing plate to determine food intake, number of bites, and chewing behavior.

Compared to these devices, the novelty of the mEETr is that it combines, in one device, automated measures of food intake from a plate, a bowl, and a drinking cup (n = 3 weighing stations) with automated measures of eating behavior (e.g., eating rate, number of bites, bite size, and chewing behavior). As demonstrated here, the mEETr is suited for within-meal measures of food intake and eating behavior in a controlled (eating laboratory) environment, but the eventual aim is to use it in less controlled environments with recurring meal plans, such as daycare centers, nursing homes, and hospitals.

Ultimately, the mEETr is expected to provide a more objective, and thereby more accurate and precise, measure of food intake and eating behavior than conventional dietary assessment methods and manual video coding. Better measures of food intake would benefit nutrition and health research, and would also help health professionals in their challenge to combat the rise in food-related non-communicable diseases11. By linking the mEETr to existing technologies and software, such as other health apps or smartwatches, it could be used in research and health-care settings as well as by health-conscious users at home. Together, these measures would provide the user or health-care professional with a diverse and complete overview of health-behavior patterns (e.g., food intake, eating behavior, energy expenditure based on real-life measures, sleep, stress), enabling the user to optimize their diet and adopt a healthy lifestyle.

Protocol

This pilot study was approved by the METC of Wageningen University prior to starting the project.

CAUTION: All participants contributing to this project provided informed consent, including approval of video images showing visible and recognizable faces.

1. Sample preparation and participant consent

- Prepare a juice (glass or cup), fruit yoghurt (bowl), and fruit pieces (plate).

NOTE: These foods were selected for demonstration purposes only (Figure 2).

- Recruit a participant or volunteer who agrees to take part in the study.

- Exclude participants who wear glasses (and cannot switch to contact lenses) and/or have facial hair (beard or mustache) to avoid measurement errors.

- Inform the participants about the study and the data collection (data storage, accessibility). Obtain separate permission for non-anonymous video recordings. Obtain the participant's signature on the informed consent form before collecting data.

2. Device and measurement location set-up

NOTE: This protocol is suited for data collection in a controlled (eating laboratory) setting.

- Make sure that the light in the room is evenly distributed; avoid shadows on the participant's face.

- Avoid background noise in the video recordings by ensuring that no individuals other than the participant are present.

- Seat the participant on a chair at a table, with the tabletop just below the participant's chest.

- Connect the wireless receiver of the tray and the webcam to a laptop.

- Start up the laptop. Ensure that the laptop has the following specifications: CPU i7-10750H, SSD M.2 512 GB, Memory 1x 16 GB, DDR4 2933 MHz non-ECC-memory, Operating system 64 bit.

- Switch on the tray and make sure the battery is charged (green light).

- Open, in this order, the connector program (DOS), the receiver program, and the processor software program together with the dashboard.

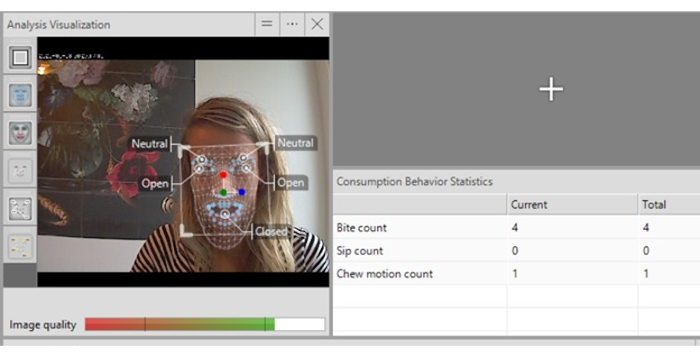

- Check the incoming image quality in the processor program (Figure 3).

NOTE: To detect eating behavior, the image quality should be within the last quarter of the bar (green), as close to 100% green as possible. Shadows on the face may lead to low image quality.

- Make sure the image frame is correct to prevent poor image quality. Ensure that the participant is clearly visible from the top of the head down to the chest, including arms and shoulders.

3. Weighing system and data transport

- Validate the measures prior to the use of the mEETr for the first time.

NOTE: The mEETr device consists of a regular, commercially available dining tray (fiber-reinforced epoxy dinner tray) with three built-in weighing stations (Figure 2).

- To validate the set-up, ensure that the tray continuously measures the weight of a plate, a bowl, and a drinking glass.

NOTE: The precision of the weighing scales should be 0.3% across the whole range.

- Do not overload the weighing platforms. The maximum load of the largest platform (dinner plate) is 1.5 kg; the maximum load of each of the two smaller platforms (bowl and glass) is 800 g. The minimum weight that can be measured accurately is 1 g for each weighing station.

- Make sure that the plate, cup, and bowl are not resting on the edge of their platform or on the surrounding tray. Use the center ring to position them.

NOTE: Each weighing station consists of three force sensors arranged in a triangle that together act as one scale. The triangular arrangement was chosen to balance the weight.

- Keep the tray dry. The tray includes a 50 mm thin base panel (central circuit board) below the tray that contains the electronics.

- For data transfer, make sure that the tray connects to a wireless receiver.

NOTE: The weighing data are transferred at 1 s intervals via a short-range radio signal (range about 1 m). Connect the receiver to a personal computer (PC) via a USB port.

- The three force sensors measure the forces (or weights), which are summed and converted to a calibrated weight value.

- Recharge the tray after each use.

NOTE: The tray is powered by an internal battery pack and can be charged with a USB charger. An on/off slide switch is located near the USB socket. A full battery charge provides about 20 h of use.

- Do not clean the tray in a dishwasher; the tray is not dishwasher-safe. Clean the tray with a cleaning spray and keep it clean and dry. Drainage channels along the platforms drain liquid spills.
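The weighing principle described in this section (three force sensors summed into one calibrated weight, per-station load limits, and a 0.3% precision target) can be sketched in Python, for example as part of a validation script. This is a minimal illustration, not the mainboard firmware: the linear calibration (`gain`, `offset_g`), the station keys, and the reading of "0.3%" as a fraction of each station's full-scale capacity are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StationSpec:
    """Load limits per weighing station, as stated in the protocol."""
    max_load_g: float
    min_load_g: float = 1.0


# Hypothetical station keys; capacities taken from the protocol text.
STATIONS = {
    "plate": StationSpec(max_load_g=1500.0),
    "bowl": StationSpec(max_load_g=800.0),
    "glass": StationSpec(max_load_g=800.0),
}


def station_weight(forces_g, offset_g=0.0, gain=1.0):
    """Sum the three force-sensor readings and apply a linear calibration.

    The linear form gain * (sum - offset) is an assumption for illustration.
    """
    if len(forces_g) != 3:
        raise ValueError("each weighing station has exactly three force sensors")
    return gain * (sum(forces_g) - offset_g)


def check_load(station, weight_g):
    """Flag readings outside the stated measuring range of a station."""
    spec = STATIONS[station]
    if weight_g > spec.max_load_g:
        return "overload"
    if weight_g < spec.min_load_g:
        return "below minimum"
    return "ok"


def passes_validation(station, measured_g, reference_g, rel_tol=0.003):
    """Compare a reading with a known reference weight.

    Assumes the 0.3% precision refers to the station's full-scale capacity.
    """
    tolerance_g = rel_tol * STATIONS[station].max_load_g
    return abs(measured_g - reference_g) <= tolerance_g
```

For example, `station_weight([100.0, 150.0, 250.0])` returns `500.0`, and placing 900 g on the bowl station would be flagged as `"overload"`. If the 0.3% is meant relative to the reading rather than full scale, the tolerance line is the only place to change.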

4. Participant explanations and start of observation

- Place the mEETr in front of the participant.

- Instruct the participant to 1) eat as much or as little as they want, 2) look straight into the webcam while eating, and 3) not put their hands in front of their face while eating.

- Start a new observation in the receiver software. Log the date, participant number, participant's gender, age, and anthropometric data, such as weight and height. Include additional information such as the study condition and the study visit in the observation name.

- Press Record in the receiver software to record the observation.

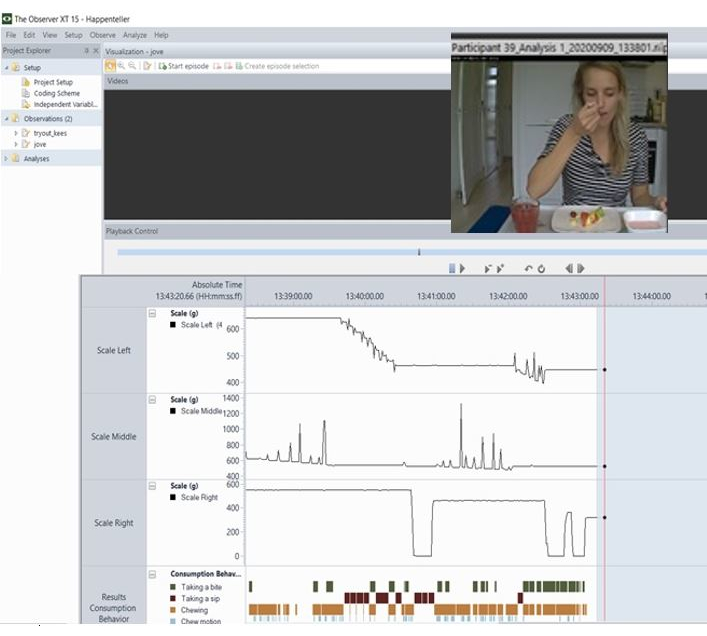

- Activate the dashboard in order to check the video recordings and the incoming data during data collection (Figure 4).

- Prior to the recording, ask the participant to 1) raise the card with the participant number, and 2) raise their hand at the start and end of the meal.

- End the observation when the participant finishes eating. It takes 2 min to transfer all the data to a spreadsheet.

- This is the end of the session for the participant.

- Disconnect the webcam and the tray receiver from the laptop, and clean the tray with a cleaning tissue or cleaning spray.

5. Evaluation and transfer of data

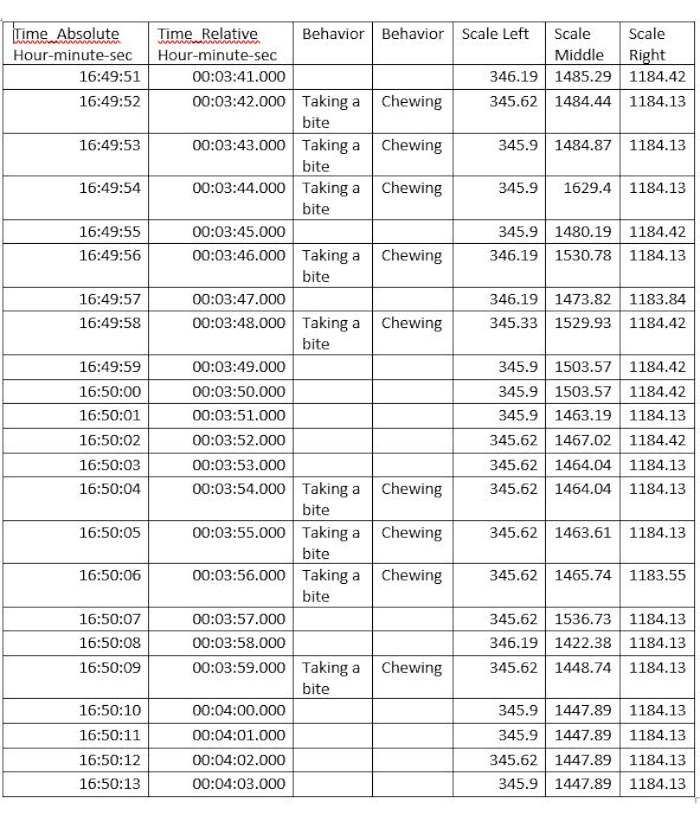

- Open the last observation in the receiver software. Automated measures of eating behavior are stored under the heading Data. Click Export Data to extract the raw data. The resulting output file contains, per test participant, the participant number, real time, time relative to the start, and eating behavior variables (number of bites, number of chews, chewing duration).

NOTE: All behaviors are time-stamped. Additional external data from the tray, for example, the weight on each of the three weighing stations, can also be extracted in the receiver software. These data are recorded 10 times per second and transferred. The tray data collection time is synchronized with the eating behavior recordings.

- Summarize and visualize the results in different bar charts within the program itself. Export the results as raw data in log files (.xls) (Figure 5).

- Export the log files to a spreadsheet and perform the data analysis using the statistical program of preference.

- Clean the data before data analysis.

NOTE: Because pressing on the plates with cutlery distorts the weighing data (causing an increase in weight), the tray weighing data need to be cleaned into a stepwise, Kaplan-Meier-like curve, in which step height indicates bite size and step length indicates time between bites; the beginning of the curve indicates the start weight and the last step indicates the end weight. Clean the data as follows:

- Smooth the data to one measurement per second to filter out extreme values.

- Set a 5 g boundary and detect weight plateaus (i.e., no change beyond ±5 g) and weight changes (changes over time larger than 5 g), which indicate bite sizes and portion changes.

- Exclude weight increase due to cutlery remaining on the plate.

NOTE: The output is the total weight change per weighing station between the beginning and end of the meal (= meal size), the average bite size, and the number of bites per minute.
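The cleaning steps above can be sketched in Python for a single weighing station. This is a minimal illustration under stated assumptions, not the authors' implementation: per-second smoothing uses the median (one possible way to suppress extreme values), a drop counts as a bite only when the new level persists for `min_plateau_s` seconds (a hypothetical parameter), and every weight increase is treated as a cutlery artifact, so refills or added portions are not handled.

```python
from collections import defaultdict
from statistics import median


def per_second(samples):
    """Reduce (time_s, weight_g) samples (e.g., 10 Hz) to one median value per second."""
    buckets = defaultdict(list)
    for t, w in samples:
        buckets[int(t)].append(w)
    return [(s, median(ws)) for s, ws in sorted(buckets.items())]


def step_curve(series, tol_g=5.0, min_plateau_s=2):
    """Turn a per-second weight series into a stepwise (Kaplan-Meier-like) curve.

    Returns (start_weight, end_weight, bites), where bites is a list of
    (time_s, bite_size_g). Only drops larger than tol_g that settle on a new
    plateau (within +/- tol_g for min_plateau_s seconds) count as bites.
    Increases are ignored as cutlery artifacts (simplifying assumption).
    """
    level = series[0][1]
    start_weight = level
    bites = []
    cand_t, cand = None, []
    for t, w in series[1:]:
        if w < level - tol_g:
            if cand and abs(w - cand[0]) <= tol_g:
                cand.append(w)
            else:
                cand_t, cand = t, [w]
            if len(cand) >= min_plateau_s:
                new_level = median(cand)
                bites.append((cand_t, level - new_level))
                level = new_level
                cand_t, cand = None, []
        else:
            # Within +/- tol_g of the current plateau, or an increase: no bite.
            cand_t, cand = None, []
    return start_weight, level, bites


def summarize(series, **kwargs):
    """Meal size, average bite size, and bites per minute for one station."""
    start, end, bites = step_curve(series, **kwargs)
    duration_min = (series[-1][0] - series[0][0]) / 60
    meal_size = start - end
    return {
        "meal_size_g": meal_size,
        "n_bites": len(bites),
        "avg_bite_g": meal_size / len(bites) if bites else 0.0,
        "bites_per_min": len(bites) / duration_min if duration_min > 0 else 0.0,
    }
```

Because each bite is the difference between two consecutive plateaus, the bite sizes add up to the meal size (start weight minus end weight). A short upward spike, such as a cutlery press, leaves the current plateau unchanged.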

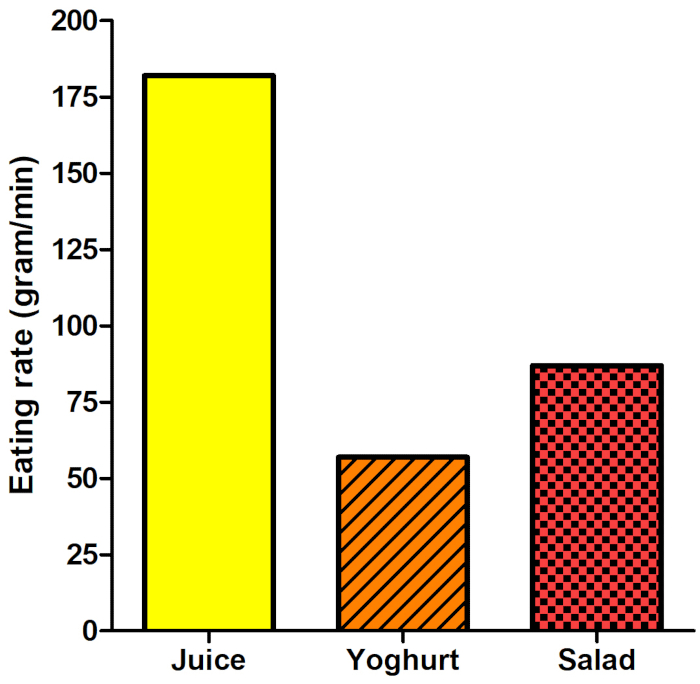

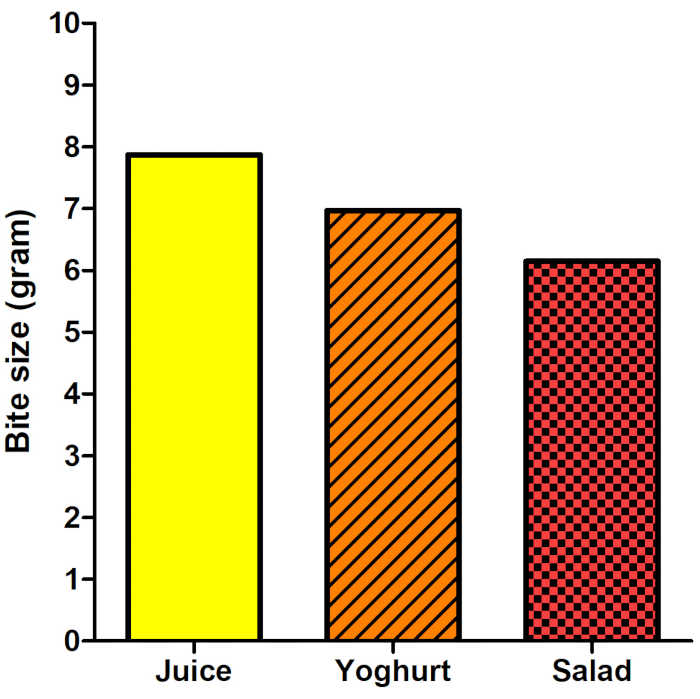

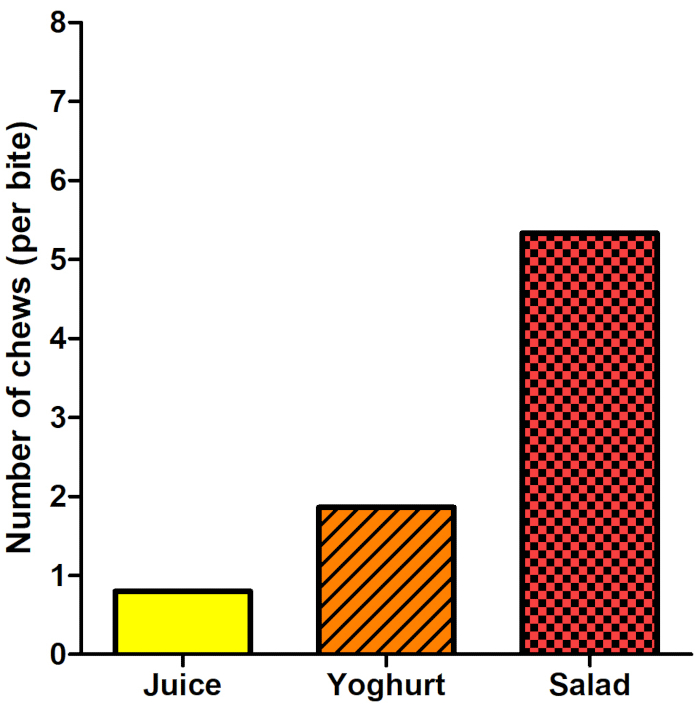

- To determine changes in eating rate (g/s) and bite size (g/bite) over the course of the meal, manually integrate the tray weight data with the eating behavior data (Figure 6, Figure 7, Figure 8, Figure 9, Figure 10).
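One way to combine the two data streams is sketched below in Python: the cleaned per-second weight series of one station is split into consecutive time windows, and for each window the weight lost is divided by the window length (eating rate) and by the number of video-detected bites in that window (bite size). This is a hypothetical sketch, not a feature of the receiver software. It assumes both streams share the synchronized time base described above and that bite timestamps have already been exported; `window_s` is an illustrative parameter. Eating rate is returned in g/min (as in Figure 7); divide by 60 for g/s.

```python
from bisect import bisect_right


def weight_at(times, weights, t):
    """Most recent weight sample at or before time t (times sorted ascending)."""
    i = bisect_right(times, t) - 1
    if i < 0:
        raise ValueError("t lies before the first weight sample")
    return weights[i]


def windowed_metrics(series, bite_times, window_s=60.0):
    """Eating rate (g/min) and bite size (g/bite) per consecutive time window.

    series: cleaned (time_s, weight_g) pairs for one weighing station.
    bite_times: bite timestamps (s) from the video analysis, same time base.
    """
    times = [t for t, _ in series]
    weights = [w for _, w in series]
    results = []
    t0, end = times[0], times[-1]
    while t0 < end:
        t1 = min(t0 + window_s, end)
        intake_g = weight_at(times, weights, t0) - weight_at(times, weights, t1)
        n_bites = sum(1 for b in bite_times if t0 <= b < t1)
        results.append({
            "window_s": (t0, t1),
            "intake_g": intake_g,
            "eating_rate_g_per_min": intake_g / ((t1 - t0) / 60),
            "bite_size_g": intake_g / n_bites if n_bites else None,
        })
        t0 = t1
    return results
```

Comparing the first and last windows then gives a simple indication of whether eating rate and bite size change over the course of the meal.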

Representative Results

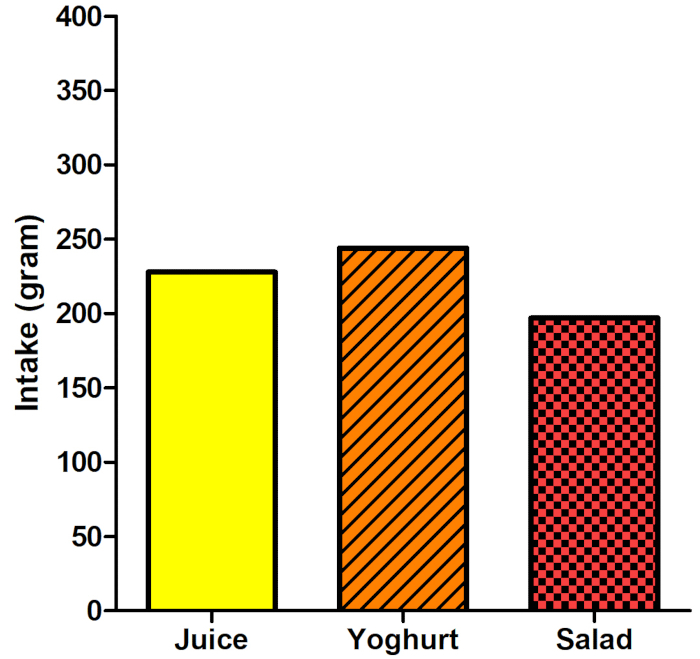

A slower ingestion rate (Figure 7), smaller sip/bite sizes (Figure 8), and more chews (Figure 9) coincided with a lower intake of the salad compared to the yoghurt and juice (Figure 6), as measured with the mEETr tray. The participants ate 17% less of the fruit salad than of the fruit juice. All eating behavior characteristics differed between the juice, yoghurt, and salad (Figure 7, Figure 8, Figure 9). The participants chewed significantly more on the fruit salad than on the yoghurt and juice; the observed number of chews differed by a factor of three between the yoghurt and the fruit salad. Additionally, bite size was smallest for the salad, at 6.5 g per bite, compared to 8 g per sip for the juice. Overall, the number of chews, bite size, and eating rate seemed to affect the amount eaten during the meal in an eating lab setting. These findings are consistent with other studies reporting that increased oral processing time (more chews, smaller bite sizes) decreases food intake12,13,14,15.

Figure 1: Picture of the tray (bottom) with the three weighing stations, and of the three pressure sensors on the printed circuit board (top).

Figure 2: The mEETr set-up with the food items tested.

Figure 3: Automated detection of the eating behavior using fixed points on the face (eyes and mouth).

Figure 4: Dashboard visualizing the incoming data of the three weighing scales of the tray as well as incoming video data.

Figure 5: Data collection overview. The decrease in the food weight on the three weighing scales during the course of the meal is shown by the three upper graphs; peaks are caused by the pressure of cutlery. Bites and sips (including duration) and number of chews are shown in the last row by the colored horizontal bars.

Figure 6: Food intake (g) per product as measured with the mEETr tray.

Figure 7: Eating rate (g/min) per product based on the mEETr tray and automated eating behavior video analysis.

Figure 8: Average bite size (g) per product based on the mEETr tray and automated eating behavior video analysis.

Figure 9: Total number of chews per product based on the mEETr tray and automated eating behavior video analysis.

Figure 10: Raw data output of a measurement, including the three weighing platforms, behavior, and time stamps.

Discussion

A healthy diet and healthy eating behavior have been shown to play a key role in the prevention and treatment of overweight and obesity11. However, many of the methods used to measure dietary intake and eating behavior are burdensome for users, researchers, and health-care professionals, and may be biased because they depend on memory and portion size estimation. Using the mEETr, either on its own or alongside conventional video and dietary assessment methods, would reduce the effort required and increase the accuracy and precision of dietary intake and eating behavior assessment.

Before the mEETr can be used, a few critical issues need to be addressed. One basic methodological consideration is privacy related to the facial analysis of recorded video. Anonymity for participants is an integral feature of ethical research; however, because facial analysis is part of the mEETr, anonymity is nearly impossible16. Thus, using the mEETr in a research setting requires extensive provisions for data safety and warrants attention in the informed consent and other participant documentation. Ideally, in an upgraded version of the mEETr, facial recordings would be processed in real time within the device without storing video data. Facial recordings would then not need to be stored at all, which would allow anonymous data collection.

Another critical point is that, in this version of the mEETr, all data streams are independent, so the measures from the video analysis and from the three scales must be integrated during post-processing. Data are analyzed simply per weighing station; it is then up to the researcher to link the data post hoc to what was served in that specific bowl, on that specific plate, or in the cup. To prevent mixing of data streams, the tray is designed so that the bowl, plate, and cup can only be placed at their specific locations, using rings that fit each piece of tableware.

Eventually, the data streams should be integrated immediately. This would serve as an additional validation measure for real-time decision making, with a weight change in the bowl, plate, or cup validating a detected bite or sip and vice versa, and would thus allow automated feedback on eating behavior immediately after the meal.
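Such cross-validation could, for example, take the form of a one-to-one matching between video-detected bites and weight drops. The Python sketch below is hypothetical and not part of the current mEETr: it greedily pairs each bite with the nearest unused weight drop within `max_lag_s` seconds (an assumed tolerance) and reports bites without a matching weight change and weight changes without a matching bite, which could then be flagged for review.

```python
def match_bites_to_drops(bite_times, drop_times, max_lag_s=3.0):
    """Greedy one-to-one matching of bite timestamps to weight-drop timestamps.

    Returns (matched, unmatched_bites, unmatched_drops), where matched is a
    list of (bite_time, drop_time) pairs in bite order.
    """
    drops = sorted(drop_times)
    used = [False] * len(drops)
    matched, unmatched_bites = [], []
    for b in sorted(bite_times):
        best = None
        for i, d in enumerate(drops):
            if used[i] or abs(d - b) > max_lag_s:
                continue
            if best is None or abs(d - b) < abs(drops[best] - b):
                best = i
        if best is None:
            unmatched_bites.append(b)
        else:
            used[best] = True
            matched.append((b, drops[best]))
    unmatched_drops = [d for d, u in zip(drops, used) if not u]
    return matched, unmatched_bites, unmatched_drops
```

Greedy matching in time order is simple but not guaranteed to be optimal when events are dense; an optimal assignment method (e.g., the Hungarian algorithm) would be an alternative.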

In addition to these critical points, data monitoring and troubleshooting (error catching) should be simplified, which could be achieved with the following modifications: (1) automation of system start-up, (2) integration of quality indicators on the dashboard that show whether video quality is sufficient for automated eating behavior detection, (3) lower memory requirements for the laptop, and (4) automated event detection that prevents measurement attempts during non-eating periods.

Beyond these areas for improvement, some challenges in using the mEETr warrant attention. First, future versions of the mEETr should be made waterproof so that they can be cleaned in a dishwasher. Second, to obtain valid measures of eating behavior, the participant needs to adhere to various restrictions and rules. For valid use of the mEETr, it is critical that the video is uninterrupted and that the user looks straight into the camera while chewing. Additionally, for the algorithm to detect chews and swallows, the user needs to 1) be fully visible in the frame, including shoulders and hands, and 2) have no shadows on the face, which requires standardized lighting. These prerequisites disturb natural eating behavior. As eating is an inherently social affair under normal living conditions, these restrictions do not mesh easily with normal social eating behavior. Thus, accurate use of the mEETr currently requires an unconventional way of eating. The algorithm needs to be adapted in the future to provide more robust measurements that do not require the participant to adhere to specific eating rules or restrictions. In general, using the mEETr may create user reactivity, i.e., altered food intake due to the awareness that intake is being measured with the mEETr tray. This may be prevented by completely hiding the built-in weighing stations and by using a fish-eye camera incorporated into the tray, so that a standard face height is not required. The current version of the mEETr is therefore only suited for lab-based studies. Because of the restrictions and rules this technique requires, the results do not translate directly to free-living eating patterns.

In addition to these alterations, two functional extensions of the current system should be incorporated in the future. First, hyperspectral camera technology should be added to the mEETr to analyze the macronutrient content of the food items on the plate. This would remove the need to know exactly which food item is on the plate while still allowing measurement of caloric intake per meal. Second, a machine learning approach to automatically recognize food items could be added to the current video analysis, allowing further automation of the system. To further improve food recognition, a second camera that focuses solely on the food and drinks on the tray could be added.

Ideally, the mEETr tray and camera can be linked to the existing ecosystem of dietary apps, which would allow the mEETr results to be fed directly into dietary apps and shared with dietitians2. Based on the information collected by the mEETr, immediate feedback and advice on (macro)nutrient intake and eating behavior (food texture and eating rate) could be given to the consumer or patient. This would enable users to optimize their diet and eating behavior and adopt a healthy lifestyle.

Disclosures

The authors have nothing to disclose.

Acknowledgements

We thank J. M. C. D. Meijer of the Technical Development Studio of Wageningen University and Research for his help in developing the mEETr tray. This research was funded by the 4 Dutch Technical Universities (4TU) Pride and Prejudice project.

Materials

| Name of Material/Equipment | Company | Product / Catalog Number | Comments/Description |
|---|---|---|---|
| Battery | na | na | Battery pack (LiPo) and charge electronics via a USB port connector. No data are transferred through this port. |
| Connector program | Noldus | Noldus Information Technology software dashboard nview | |
| Dinner tray | na | na | Standard dinner tray made of glass-fiber-reinforced epoxy |
| Larger scale | na | na | One high-range custom-made scale based on a triple force sensor method. |
| Mainboard | na | na | A mainboard that converts the three scale measurements to calibrated weight values. This board also contains the low-power short-range RF transmitter. |
| OS Windows | Microsoft | Windows 10 Pro 64-bit | |
| Processor program | Noldus | Noldus Information Technology software FaceReader | |
| Receiver program | Noldus | Noldus Information Technology software Observer | |
| RF receiver | na | na | Custom-built USB converter connected to an RF receiver. The receiver has a squelch setting that limits its sensitivity to a short range. |
| Small scales | na | na | Two low-range custom-made scales based on a triple force sensor method. |

References

1. Burrows, T. L., Ho, Y. Y., Rollo, M. E., Collins, C. E. Validity of dietary assessment methods when compared to the method of doubly labeled water: A systematic review in adults. Frontiers in Endocrinology. 10, 850 (2019).

2. Brouwer-Brolsma, E. M., et al. Dietary intake assessment: From traditional paper-pencil questionnaires to technology-based tools. Environmental Software Systems. Data Science in Action. Advances in Information and Communication Technology. 554 (2020).

3. Palese, A., et al. What nursing home environment can maximise eating independence among residents with cognitive impairment? Findings from a secondary analysis. Geriatric Nursing. 41 (6), 709-716 (2020).

4. Krop, E. M., et al. Influence of oral processing on appetite and food intake – A systematic review and meta-analysis. Appetite. 125, 253-269 (2018).

5. Nicolas, E., Veyrune, J. L., Lassauzay, C., Peyron, M. A., Hennequin, M. Validation of video versus electromyography for chewing evaluation of the elderly wearing a complete denture. Journal of Oral Rehabilitation. 34 (8), 566-571 (2007).

6. Sabin, M. A., et al. A novel treatment for childhood obesity using Mandometer® technology. International Journal of Obesity. 7 (2006).

7. Hermsen, S., et al. Evaluation of a smart fork to decelerate eating rate. Journal of the Academy of Nutrition and Dietetics. 116 (7), 1066-1068 (2016).

8. van den Boer, J., et al. The SPLENDID eating detection sensor: Development and feasibility study. JMIR mHealth and uHealth. 6 (9), 170 (2018).

9. Papapanagiotou, V., et al. A novel chewing detection system based on PPG, audio, and accelerometry. IEEE Journal of Biomedical and Health Informatics. 21 (3), 607-618 (2016).

10. Ioakimidis, I., et al. Description of chewing and food intake over the course of a meal. Physiology & Behavior. 104 (5), 761-769 (2011).

11. Ruiz, L. D., Zuelch, M. L., Dimitratos, S. M., Scherr, R. E. Adolescent obesity: Diet quality, psychosocial health, and cardiometabolic risk factors. Nutrients. 12 (1), 43 (2020).

12. Forde, C. G., van Kuijk, N., Thaler, T., de Graaf, C., Martin, N. Oral processing characteristics of solid savoury meal components, and relationship with food composition, sensory attributes and expected satiation. Appetite. 60 (1), 208-219 (2013).

13. Weijzen, P. L. G., Smeets, P. A. M., de Graaf, C. Sip size of orangeade: effects on intake and sensory-specific satiation. British Journal of Nutrition. 102 (7), 1091-1097 (2009).

14. Zijlstra, N., de Wijk, R. A., Mars, M., Stafleu, A., de Graaf, C. Effect of bite size and oral processing time of a semisolid food on satiation. The American Journal of Clinical Nutrition. 90 (2), 269-275 (2009).

15. Bolhuis, D. P., et al. Slow food: sustained impact of harder foods on the reduction in energy intake over the course of the day. PLoS One. 9 (4), 93370 (2014).

16. Grinyer, A. The anonymity of research participants: assumptions, ethics and practicalities. Social Research Update. 36 (1), 4 (2002).