Exploring the Use of Isolated Expressions and Film Clips to Evaluate Emotion Recognition by People with Traumatic Brain Injury

Summary

This paper describes how to implement a battery of behavioral tasks to examine emotion recognition of isolated facial and vocal emotion expressions, and a novel task using commercial television and film clips to assess multimodal emotion recognition that includes contextual cues.

Abstract

The current study presented 60 people with traumatic brain injury (TBI) and 60 controls with isolated facial emotion expressions, isolated vocal emotion expressions, and multimodal (i.e., film clips) stimuli that included contextual cues. All stimuli were presented via computer. Participants were required to indicate how the person in each stimulus was feeling using a forced-choice format. Additionally, for the film clips, participants had to indicate how they felt in response to the stimulus, and the level of intensity with which they experienced that emotion.

Introduction

Traumatic brain injury (TBI) affects approximately 10 million people each year across the world 1. Following TBI, the ability to recognize emotion in others using nonverbal cues such as facial and vocal expressions (i.e., tone of voice), and contextual cues, is often significantly compromised 2-7. Since successful interpersonal interactions and quality relationships are at least partially dependent on accurate interpretation of others' emotions7-10, it is not surprising that difficulty with emotion recognition has been reported to contribute to the poor social outcomes commonly reported following TBI3,8,11,12.

Studies investigating emotion recognition by people with TBI have tended to focus on isolated cues, particularly recognition of facial emotion expressions using static photographs2. While this work has been important in informing the development of treatment programs, it does not adequately represent one's ability to recognize and interpret nonverbal cues of emotion in everyday interactions. Not only do static images provide increased time to interpret the emotion portrayed, they also typically only portray the apex (i.e., maximum representation) of the expressions13-16. Additionally, static images lack the temporal cues that occur with movement12,13,15, and are not representative of the quickly changing facial expressions of emotion encountered in everyday situations. Further, there is evidence to indicate that static and dynamic visual expressions are processed in different areas of the brain, with more accurate responses to dynamic stimuli17-24.

Vocal emotion recognition by people with TBI has also been studied, both with and without meaningful verbal content. It has been suggested that the presence of verbal content increases perceptual demands because accurate interpretation requires simultaneous processing of the nonverbal tone of voice with the semantic content included in the verbal message25. While many studies have shown improved recognition of vocal affect in the absence of meaningful verbal content, Dimoska et al. 25 found no difference in performance. They argue that people with TBI have a bias toward semantic content in affective sentences, so even when the content is semantically neutral or eliminated, they continue to focus on the verbal information in the sentence. Thus, their results did not clearly show whether meaningful verbal content helps or hinders vocal emotion recognition.

In addition to the meaningful verbal content that can accompany the nonverbal vocal cues, context is also provided through the social situation itself. Situational context provides background information about the circumstances under which the emotion expression was produced and can thus greatly influence the interpretation of the expression. When evaluating how someone is feeling, we use context to determine if the situation is consistent with that person's wants and expectations. This ultimately affects how someone feels, so knowledge of the situational context should result in more accurate inferencing of others' emotion26. The ability to do this is referred to as theory of mind, a construct found to be significantly impaired following TBI6,27-31. Milders et al. 8,31 report that people with TBI have significant difficulty making accurate judgments about and understanding the intentions and feelings of characters in brief stories.

While these studies have highlighted deficits in nonverbal and situational emotion processing, it is difficult to generalize the results to everyday social interactions where these cues occur alongside one another and within a social context. To better understand how people with TBI interpret emotion in everyday social interactions, we need to use stimuli that are multimodal in nature. Only two previous studies have included multimodal stimuli as part of their investigation into how people with TBI process emotion cues. McDonald and Saunders15 and Williams and Wood16 extracted stimuli from the Emotion Evaluation Test (EET), which is part of The Awareness of Social Inference Test26. The EET consists of videotaped vignettes that show male and female actors portraying nonverbal emotion cues while engaging in conversation. The verbal content in the conversations is semantically neutral; cues regarding how the actors are feeling and contextual information are not provided. Thus, the need to process and integrate meaningful verbal content while simultaneously doing this with the nonverbal facial and vocal cues was eliminated. Results of these studies indicated that people with TBI were significantly less accurate than controls in their ability to identify emotion from multimodal stimuli. Since neither study considered the influence of semantic or contextual information on interpretation of emotion expressions, it remains unclear whether the addition of this type of information would facilitate processing because of increased intersensory redundancy or negatively affect perception because of increased cognitive demands.

This article outlines a set of tasks used to compare perception of facial and vocal emotional cues in isolation, and perception of these cues occurring simultaneously within meaningful situational context. The isolation tasks are part of a larger test of nonverbal emotion recognition, The Diagnostic Analysis of Nonverbal Accuracy 2 (DANVA2)32. In this protocol, we used the Adult Faces (DANVA-Faces) and Adult Paralanguage (DANVA-Voices) subtests. Each of these subtests includes 24 stimuli, depicting six representations each of happy, sad, angry, and fearful emotions. The DANVA2 requires participants to identify the emotion portrayed using a forced-choice format (4 choices). Here, a fifth option (I don't know) is provided for both subtests. The amount of time each facial expression was shown was increased from two seconds to five seconds to ensure that we were assessing affect recognition and not speed of processing. We did not alter the adult paralanguage subtest beyond adding the fifth choice to the forced-choice response format. Participants heard each sentence one time only. Feedback was not provided in either task.

Film clips were used to assess perception of facial and vocal emotion cues occurring simultaneously within situational context. While the verbal content within these clips did not explicitly state what the characters in the clips were feeling, it was meaningful to the situational context. Fifteen clips extracted from commercial movies and television series were included in the study, ranging from 45 to 103 seconds (mean = 71.87 seconds). Feedback was not provided to participants during this task. There were three clips representing each of the following emotion categories: happy, sad, angry, fearful, neutral. These clips were chosen based on results of a study conducted with young adults (n = 70; Zupan, B. & Babbage, D. R. Emotion elicitation stimuli from film clips and narrative text. Manuscript submitted to J Soc Psyc). In that study, participants were presented with six film clips for each of the target emotion categories, and six neutral film clips, ranging from 24 to 127 seconds (mean = 77.3 seconds). While the goal was to include relatively short film clips, each clip chosen for inclusion needed to have sufficient contextual information that it could be understood by viewers without having additional knowledge of the film from which it was taken33.

The clips selected for the current study had been correctly identified as the target emotion in the Zupan and Babbage study cited above at rates of 83 – 89% for happy, 86 – 93% for sad, 63 – 93% for angry, 77 – 96% for fearful, and 81 – 87% for neutral. While having the same number of exemplars of each emotion category as in the DANVA2 tasks (n = 6) would have been ideal, we opted for only three due to the increased length of the film clip stimuli compared to stimuli in the DANVA-Faces and DANVA-Voices tasks.

Data from these tasks were collected from 60 participants with TBI and 60 age- and gender-matched controls. Participants were seen either individually or in small groups (max = 3) and the order of the three tasks was randomized across testing sessions.

Protocol

This protocol was approved by the institutional review boards at Brock University and at Carolinas Rehabilitation.

1. Prior to Testing

- Create 3 separate lists for the 15 film clips, each listing the clips in a different restricted order.

- Ensure that each list begins and ends with a neutral stimulus and that no two clips that target the same emotion occur consecutively.

- Create a separate folder on the desktop for each list of film order presentation and label the folder with the Order name (e.g., Order 1).

- Save the 15 clips into each of the three folders.

- Re-label each film clip so it reflects the presentation number and gives no clues regarding the target emotion (i.e., 01; 02; 03; etc.).
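
The restricted ordering and neutral re-labeling described above can be sketched in code. This is a minimal illustration, not the procedure the authors used: the clip identifiers (`happy_1`, etc.), the function names, and the use of Python's `random` module are all assumptions made for the example.

```python
import random

# Hypothetical clip identifiers: three clips for each of the five categories.
CLIPS = [(f"{emotion}_{i}", emotion)
         for emotion in ("happy", "sad", "angry", "fearful", "neutral")
         for i in (1, 2, 3)]


def make_restricted_order(clips, rng, max_tries=10_000):
    """Shuffle clips so the list starts and ends with a neutral clip and
    no two consecutive clips target the same emotion."""
    neutral = [c for c in clips if c[1] == "neutral"]
    others = [c for c in clips if c[1] != "neutral"]
    if len(neutral) < 2:
        raise ValueError("Need at least two neutral clips.")
    for _ in range(max_tries):
        rng.shuffle(neutral)
        middle = others + neutral[2:]      # remaining neutral clip(s) go in the middle
        rng.shuffle(middle)
        order = [neutral[0]] + middle + [neutral[1]]
        # Reject any shuffle in which adjacent clips share a target emotion.
        if all(a[1] != b[1] for a, b in zip(order, order[1:])):
            return order
    raise RuntimeError("No valid order found; try raising max_tries.")


def make_order_lists(clips, n_lists=3, seed=0):
    """Build n_lists distinct restricted orders (e.g., Order 1 to Order 3)."""
    rng = random.Random(seed)
    orders = []
    while len(orders) < n_lists:
        order = make_restricted_order(clips, rng)
        if order not in orders:
            orders.append(order)
    return orders


def answer_key(order):
    """Map neutral presentation labels (01, 02, ...) to target emotions."""
    return {f"{position:02d}": emotion
            for position, (_, emotion) in enumerate(order, start=1)}
```

Calling `make_order_lists(CLIPS)` yields three orders, and `answer_key(order)` gives the label-to-emotion mapping that can later serve as the scoring key for that order. The labels themselves reveal nothing about the target emotion.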

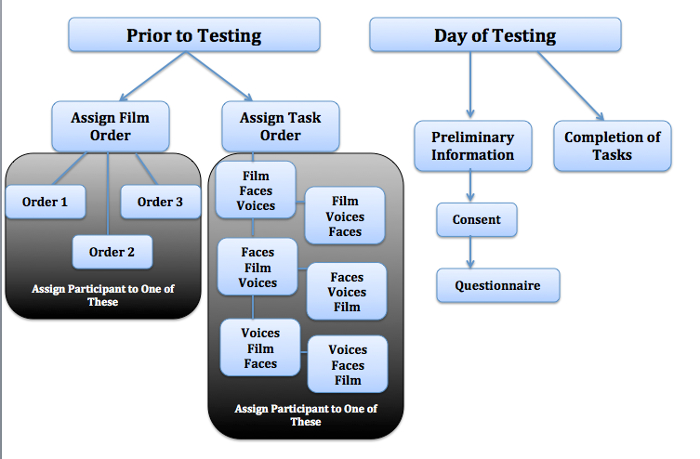

- Create six randomized orders of task (DANVA-Faces, DANVA-Voices, Film Clips) presentation (see Figure 1).

- Assign the incoming participant to one of the three restricted orders of film clips presentation.

- Assign the incoming participant to one of the six randomized orders of task presentation.
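
The assignment steps above can be sketched as follows. Three tasks admit exactly six possible presentation orders, so the sketch simply enumerates them. The participant identifiers, the function name, and the seeded `random.Random` generator are assumptions for illustration; the study itself reports using an on-line random number generator.

```python
import itertools
import random

TASKS = ("DANVA-Faces", "DANVA-Voices", "Film Clips")

# All 3! = 6 possible task presentation orders.
TASK_ORDERS = list(itertools.permutations(TASKS))


def assign_orders(participant_ids, n_clip_orders=3, seed=0):
    """Assign each participant a film clip order (1..n_clip_orders)
    and one of the six task orders, reproducibly for a given seed."""
    rng = random.Random(seed)
    assignments = {}
    for pid in participant_ids:
        assignments[pid] = {
            "clip_order": rng.randint(1, n_clip_orders),  # inclusive bounds
            "task_order": rng.choice(TASK_ORDERS),
        }
    return assignments
```

For example, `assign_orders(["P01", "P02"])` returns a dictionary keyed by the (hypothetical) participant IDs. Fixing the seed keeps the assignment list reproducible if it needs to be regenerated.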

2. Day of Testing

- Bring participant(s) into the lab and seat them comfortably at the table.

- Review the consent form with the participant by reading each section with him/her.

- Answer any questions the participant may have about the consent form and have him/her sign it.

- Have participants complete the brief demographic questionnaire (date of birth; gender). Have participants with TBI additionally complete the section of the questionnaire on relevant medical history (date of injury, cause of injury, injury severity).

NOTE: When participants are completing the questionnaire, inquire about any known visual processing difficulties so you may seat participants with TBI accordingly.

- Position the participant in front of the computer in a chair. Ensure that all participants (if more than one) have a clear view of the screen.

3. DANVA-Faces Task

- Give each participant a clip board with the DANVA-Faces response sheet attached and a pen to circle responses.

- Provide the participant the following instructions: "After you see each item, circle your response (happy, sad, angry, fearful, or I don't know) on the line that corresponds to the item number shown on each slide."

NOTE: If participants with TBI have indicated visual processing difficulties or fine motor difficulties are evident, provide the participant with the alternate response page. This page lists the five responses in larger text in landscape format.

- Open the DANVA-Faces task in a presentation and play it using the Slide Show View.

- Give participants the following instructions: "For this activity, I am going to show you some people's faces and I want you to tell me how they feel. I want you to tell me if they are happy, sad, angry or fearful. Fearful is the same thing as afraid or scared. If you are unsure of the emotion, choose the 'I don't know' response. There are 24 faces altogether. Each face will be on the screen for five seconds. You must answer before the face disappears for your answer to count. Indicate your answer by circling it on the sheet in front of you."

NOTE: If the participant is unable to circle his/her own responses and is participating in the study as part of a small group, provide the following instruction: "Indicate your answer by pointing to it on the sheet in front of you." The examiner will then record the participant's response on the DANVA-Faces response sheet.

- Ensure the participants do not have any questions before starting.

- Start the task with the two practice trials. Give participants the following instructions: "We are going to complete two practice trials so that you get a sense of how long each face will appear on the screen, and how long you have to provide your answer".

- Hit enter on the keyboard when the face disappears to move to the next stimulus. Ensure all participants are looking up at the screen before doing so.

- Ensure that the participants do not have questions. If none, begin the test trials. Ensure all participants are looking at the screen. Hit enter to start the test stimuli.

- Continue the procedure outlined for the practice tasks until the 24 stimuli are complete.

- Collect the participant response sheets.

4. DANVA-Voices Task

- Give each participant a clip board with the DANVA-Voices response sheet attached and a pen to circle responses.

NOTE: If participants with TBI have indicated visual processing difficulties or fine motor difficulties are evident, provide the participant with the alternate response page. This page lists the five responses in larger text in landscape format.

- Open the DANVA-Voices task by going to the following website: http://www.psychology.emory.edu/clinical/interpersonal/danva.htm

- Click on the link for Adult faces, voices, and postures.

- Fill in the login and password by entering any letter for the login and typing EMORYDANVA2 for the password.

- Click Continue.

- Click on Voices (green circle in the center of the screen).

- Test the volume level. Provide the following instructions: "I want to be sure that you can hear the sound on the computer and that it is at a comfortable volume for you. I am going to click test sound. Please tell me if you can hear the sound, and also if you need the sound to be louder or more quiet to be comfortable for you."

- Click test sound.

- Adjust the volume according to the participant's request (increase/decrease).

- Click 'test sound' again. Adjust the volume according to the participant's request. Do this until the participant reports it is at a comfortable volume.

- Review the task instructions with the participant. Give participants the following instructions: "For this activity, you are going to hear someone say the sentence 'I'm going out of the room now, but I'll be back later.' I want you to tell me if they are happy, sad, angry or fearful. Fearful is the same thing as afraid or scared. If you are unsure of the emotion, choose the 'I don't know' response. There are 24 sentences. Before each sentence is spoken, a number will be announced. You need to listen to the sentence that follows. The sentences will not be repeated so you need to listen carefully. Tell me how the person is feeling by circling your answer on the sheet in front of you. Once you have made your selection, I need to select a response on the computer screen to move to the next item. I will always select the same emotion (fearful). My selection does not indicate the correct answer in any way."

NOTE: If the participant is unable to circle his/her own responses and is participating in the study as part of a small group, provide the following instruction: "Indicate your answer by pointing to it on the sheet in front of you." The examiner will then record the participant's response on the DANVA-Voices response sheet.

- Click 'continue'.

- Direct participants to circle (or point to) their answer on their response sheet when the sentence has played.

- Click 'fearful'. Click 'next'.

- Continue this procedure until the 24 sentences are complete.

- Exit the website when you reach the end of the task.

- Collect the participants' response sheets.

5. Film Clip Task

- Seat participants comfortably in front of the computer. Ensure that the screen can be fully seen by all.

- Give each person a clipboard with a copy of the Emotional Film Clip Response sheet.

NOTE: If participants with TBI have indicated visual processing difficulties or fine motor difficulties are evident, provide the participant with two alternate response pages. The first page lists the five forced-choice responses and the second provides the 0-9 scale. Both response pages are in larger text and in landscape format.

- Open the folder on the computer for the assigned order of film clip presentation.

- Provide participants with the following directions: "You are going to view a total of 15 film clips. In each film clip, you are going to see a character who looks like they are feeling a certain emotion. They might look 'happy', 'sad', 'angry', 'fearful', or 'neutral'. First, I want you to tell me what emotion the character is showing. Then tell me what emotion you experienced while watching the film clip. Finally, tell me the number that best describes the intensity of the emotion you felt while watching the clip. For example, if the person's face made you feel 'mildly' happy or sad, circle a number ranging from 1 to 3. If you felt 'moderately' happy or sad, circle a number ranging from 4 to 6. If you felt 'very' happy or sad, circle a number ranging from 7 to 9. Only circle 0 if you did not feel any emotion at all. Do you have any questions?"

NOTE: Only the first question (Tell me what emotion the character is showing) was analyzed for the current study.

- Double click 01 to open the first film clip.

- Tell the participant which character to focus on while viewing the clip.

- Select 'View Full Screen' in the options menu to play the clip.

- Direct the participants to respond to line a (What emotion is the main character experiencing?) on their response sheet when the clip has finished playing.

- Direct the participants to respond to line b (Choose one emotion that best describes how you felt while watching the clip) on the response sheet once line a has been answered.

- Direct the participant to respond to line c (How would you rate the intensity of the emotion you experienced while watching the clip?) once line b has been answered.

- Direct the participant to re-direct his/her attention to the computer screen once the responses are complete.

- Return to the folder on the computer that contains the film clips in the assigned order for the participant.

- Double click on 02 to open the second film clip. Repeat Steps 5.5 to 5.12 until the participant has viewed all 15 film clips.

- Collect the response sheet from the participant(s).

6. Moving from One Task to Another (Optional Step)

- Provide participants the option to take a break between Tasks 2 and 3.

7. Scoring the Tasks

- Refer to the answer key on page 25 of the DANVA2 manual32 to score the DANVA-Faces task.

- Score items that match the answer key as 1 and incorrect items as 0. 'I don't know' responses are scored as 0.

- Calculate a raw score by adding the total number of correct responses. Divide the raw score by 24 (total items) to obtain a percentage accuracy score.

- Refer to the answer key on page 27 of the DANVA2 manual32 and repeat steps 7.2 and 7.3 to score the DANVA-Voices task.

- Refer to the answer key for assigned order of film clip presentation for the participant. Score a 1 if the participant identified the target emotion when responding to the question about what emotion the character was showing, and 0 if the participant did not select the target emotion (including if the participant said 'I don't know').

- Calculate a raw score by adding the number of correct responses. Divide the raw score by 15 (total items) to obtain a percentage accuracy score.

- Create a subset score for the Film Clip task that contains only responses to the happy, sad, angry, and fearful film clips by adding correct responses to only these 12 clips. Divide the number of correct responses by 12 to obtain a percentage accuracy score.

- Create a score for responses to neutral film clips by adding the number of correct responses to the neutral clips. Divide the number of correct responses by 3 to obtain a percentage accuracy score.

NOTE: A subset score needs to be created because the DANVA tasks did not include portrayals of Neutral while the Film Clips task did.

- Calculate a score for responses to positively valenced items (i.e., happy) and negatively valenced items (combine responses to angry, fearful and sad stimuli) for all three tasks.

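- Divide the score for positively valenced items for each task by the number of positively valenced items in that task (6 for each DANVA task; 3 for the Film Clips task) to obtain a percentage accuracy score.

- Divide the score for negatively valenced items for each task by the number of negatively valenced items in that task (18 for each DANVA task; 9 for the Film Clips task) to obtain a percentage accuracy score.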
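
The scoring steps above can be sketched in code. This is a minimal illustration under stated assumptions: the function names and the dictionary layout (item label mapped to emotion) are hypothetical, and any answer key passed in must come from the DANVA2 manual or from the film clip order actually used. Each denominator is simply the number of items in the relevant subset of the answer key.

```python
NEGATIVE = {"sad", "angry", "fearful"}


def percent_correct(responses, answer_key, items):
    """Percentage of `items` on which the response matches the answer key.
    Any non-matching response (including "I don't know" or a blank) scores 0."""
    correct = sum(1 for item in items if responses.get(item) == answer_key[item])
    return 100.0 * correct / len(items)


def score_task(responses, answer_key):
    """Return percentage accuracy for the whole task and for each subset
    (emotional items only, positive, negative, neutral) present in the key."""
    items = list(answer_key)
    subsets = {
        "total": items,
        "emotional_subset": [i for i in items if answer_key[i] != "neutral"],
        "positive": [i for i in items if answer_key[i] == "happy"],
        "negative": [i for i in items if answer_key[i] in NEGATIVE],
        "neutral": [i for i in items if answer_key[i] == "neutral"],
    }
    # Skip subsets with no items (e.g., neutral for the DANVA tasks).
    return {name: percent_correct(responses, answer_key, subset)
            for name, subset in subsets.items() if subset}
```

For a 15-item film clip key, `score_task` returns the overall score (out of 15), the emotional subset score (out of 12), and the positive, negative, and neutral scores. For a DANVA key with no neutral items, the neutral entry is omitted and the total and emotional subset scores coincide.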

8. Data Analysis

- Conduct a mixed model analysis of variance (ANOVA) to examine responses to isolated facial emotion stimuli, isolated vocal emotion stimuli, and multimodal film clip stimuli by participants with TBI and Control participants.

- Explore main effects found in the ANOVA using follow-up univariate comparisons.
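
The full mixed model ANOVA is best run in a dedicated statistics package and is not reproduced here. As a hedged illustration of the follow-up univariate comparisons only, the sketch below computes a one-way between-groups F statistic for a single task from two lists of accuracy scores. The function name and any data passed to it are hypothetical, and the p-value (which requires the F distribution) is deliberately left to a statistics package.

```python
from statistics import mean


def one_way_f(*groups):
    """Return (F, df_between, df_within) for a one-way between-groups ANOVA.

    Each argument is a list of scores for one group (e.g., TBI, Controls).
    """
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    # Variability of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variability of individual scores around their own group mean.
    ss_within = sum(sum((x - mean(g)) ** 2 for x in g) for g in groups)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    f_value = (ss_between / df_between) / (ss_within / df_within)
    return f_value, df_between, df_within
```

With two groups the degrees of freedom are 1 and N − 2, which matches the form of the between-group comparisons reported in the Representative Results, for example F(1, 118) when all 120 participants contribute data.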

Representative Results

This task battery was used to compare emotion recognition for isolated emotion expressions (i.e., face-only; voice-only) and combined emotion expressions (i.e., face and voice) that occur within a situational context. A total of 60 (37 males and 23 females) participants with moderate to severe TBI between the ages of 21 and 63 years (mean = 40.98) and 60 (38 males and 22 females) age-matched Controls (range = 18 to 63; mean = 40.64) completed the three tasks. To participate in the study, participants with a TBI needed to have a Glasgow Coma Scale score, posttraumatic amnesia, or loss of consciousness that was indicative of a moderate to severe TBI. The average time since injury was 13.68 years and the most common cause of injury was a motor vehicle accident (72%). Participants with a TBI had completed an average of 14.43 years of education and control participants 15.72 years of education. Participants with TBI were excluded from the study if they had a developmental affective disorder, acquired neurological disorder, major psychiatric disorder or impaired vision or hearing that would inhibit full participation in study tasks. Control participants were excluded if they reported any history of TBI of any severity, including concussion resulting in postconcussive syndrome. All participants spoke English as their primary language.

To ensure there were no task order effects, we assigned participants to one of three restricted randomized orders of film clips and one of six task orders (see Figure 1). Film and task order were pre-determined using an on-line random number generator. Neither film nor task order was shown to affect performance, and thus neither was used as a covariate in analyses.

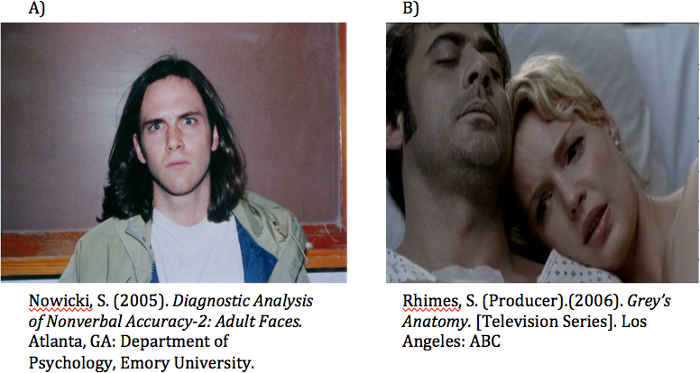

Figure 2 includes a pictorial example of a stimulus from the DANVA-Faces task and the Film Clips task. The benefits and challenges of using the DANVA2 and Film Clip stimuli are examined in the discussion.

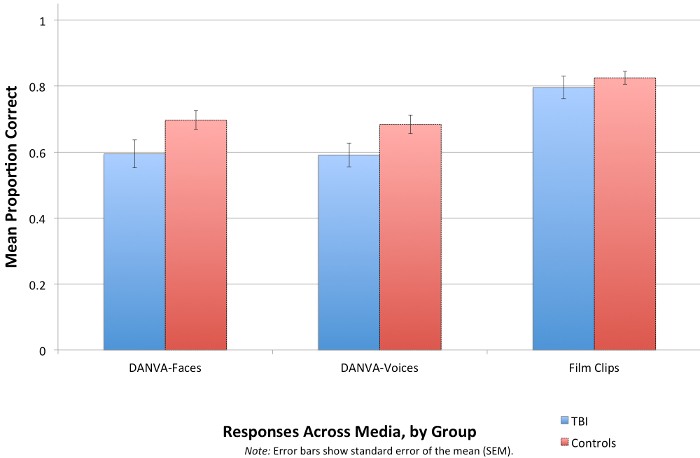

An ANOVA examining group differences across the three affect recognition tasks found significant main effects for group [F(1, 100) = 9.32, p = 0.003] and task [F(2, 200) = 11.05, p < 0.001]. As hypothesized, people with TBI were significantly less accurate than Controls on the DANVA-Faces task, F(1, 101) = 6.77, p = 0.005, and the DANVA-Voices task, F(1, 100) = 9.64, p = 0.002 (see Figure 3). It was surprising to find that recognition of emotion in the film clips task did not significantly differ between the two groups, F(1, 118) = 2.12, p = 0.15. We had anticipated that the multimodal nature of the film clips would increase the cognitive demand of the task, making it more difficult for people with TBI to recognize emotion in the film clips compared to Controls. This was not the case. It appears that the multimodal cues increased redundancy, allowing people with TBI to recognize emotion more easily than they could in the isolated expressions.

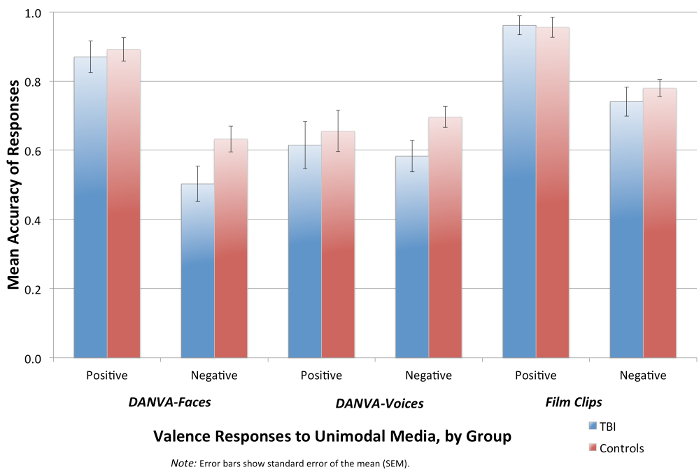

Similar to previous work in this area, people with TBI found it significantly more challenging to recognize negative emotion expressions than positive ones for both the DANVA-Faces [F(1, 101) = 6.8, p = 0.01] and the DANVA-Voices [F(1, 100) = 10.85, p = 0.001] tasks (see Figure 4).

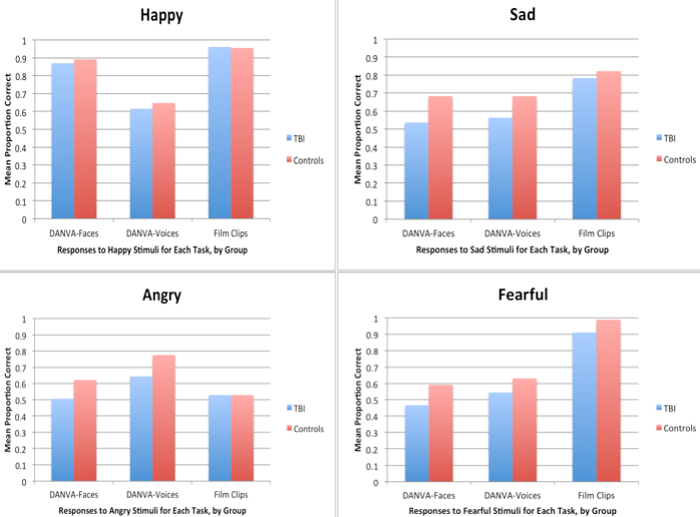

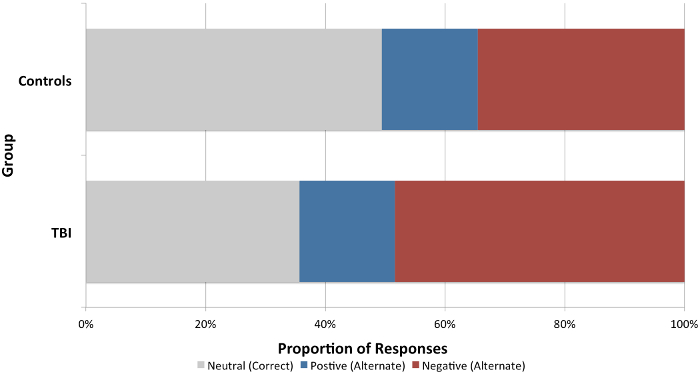

While studies in this area have reported valence effects, few studies have examined responses to the target emotions. We analyzed responses for happy, sad, fearful, and angry stimuli for all three tasks (see Figure 5) and additionally analyzed responses to the neutral film clips (see Figure 6). Although the valence results indicated that people with TBI generally found negatively valenced stimuli more challenging to identify than people without TBI, we wanted to investigate whether a particular emotion (e.g., angry) was driving this result. Since happy was our only positively valenced emotion, our results here mirrored those for valence. When examining responses to the negatively valenced emotions (sad, angry, fearful), results showed that people with TBI found fearful to be the most difficult emotion to identify. In fact, among the four target emotions, this was the only between-group difference found in the Film Clip task. Identifying neutral was significantly more difficult for people with TBI than Controls, F(1, 118) = 6.68, p = 0.001. When neutral was incorrectly identified, people with TBI were significantly more likely to provide a negatively valenced response than Controls, F(2, 236) = 4.75, p = 0.009.

Figure 1. Schematic representation of protocol. Prior to testing, participants were assigned to one of three presentation orders for the film clips. They were also assigned to one of six randomized orders of task completion. On the day of testing, participants provided preliminary information before completing the experimental tasks [Film clips, DANVA-Faces (Faces), and DANVA-Voices (Voices)].

Figure 2. Examples of Stimuli Used. A) An example of the isolated facial stimuli in the DANVA-Faces task. All stimuli in this task are static photographs with the same background. B) A screenshot of one film clip in the Film Clips task. All film clips were taken from commercial movies and television and ranged from 45 – 103 seconds in length.

Figure 3. Responses across Media, by Group. Responses for each task type by people with and without TBI. Participants with TBI were significantly less accurate than Controls for recognition of static facial expressions of emotion [DANVA-Faces; F(1, 101) = 6.77; p = 0.005], and vocal emotion expressions [DANVA-Voices; F(1, 100) = 9.64, p = 0.002]. The difference of recognition of emotion for the context-enriched film clips was not significant [F(1, 118) = 2.12, p = 0.15]. Error bars show standard error of the mean (SEM).

Figure 4. Valence Responses across Media, by Group. Responses to positive versus negative stimuli across tasks. People with TBI were able to recognize positive emotion expressions similarly to Controls but had significantly more difficulty recognizing negative facial emotion expressions [DANVA-Faces; F(1, 101) = 6.8, p = 0.01] and negative vocal emotion expressions [DANVA-Voices; F(1,100) = 10.85, p = 0.001]. Error bars show standard error of the mean (SEM).

Figure 5. Responses for Each Emotion Category across Media, by Group. This figure shows responses to each of the target emotions for people with TBI and Controls for the DANVA-Faces, DANVA-Voices, and Film Clips tasks. People with TBI performed similarly to Controls for Happy, regardless of the stimulus type. For sad, angry, and fearful, people with TBI were significantly less accurate than Controls for DANVA-Faces and DANVA-Voices tasks. People with TBI also had significantly more difficulty identifying fearful than Controls in Film Clips.

Figure 6. Responses to Neutral Stimuli in the Film Clips Task, by Group. People with TBI had significantly more difficulty labeling neutral film clips as neutral than Controls, F(1, 118) = 6.68, p = 0.001. When neutral was incorrectly identified, people with TBI were also significantly more likely to provide a negatively valenced response instead than Controls, F(2,236) = 4.75, p = 0.009.

| Emotion | Television Show/Commercial Film | Scene | Length (sec) |
| --- | --- | --- | --- |
| Happy | Sweet Home Alabama, D & D Films, 2002. Director: A. Tennant | A man surprises his girlfriend with a marriage proposal at a jewelry store. | 76 |
| Happy | Wedding Crashers, New Line Cinema, 2005. Director: D. Dobkin | A young couple is sitting on the beach playing flirtatious games. | 62 |
| Happy | Remember the Titans, Jerry Bruckheimer Films, 2000. Director: B. Yakin | A black family is accepted into a white community after the high school football team wins the championship game. | 65 |
| Sad | Grey’s Anatomy, ABC, 2006. Director: P. Horton | A father is going into surgery and is only able to communicate with his family by blinking his eyes. | 98 |
| Sad | Armageddon, Touchstone Pictures, 1998. Director: M. Bay | A daughter must say goodbye to her father while he is on a dangerous mission. | 75 |
| Sad | Grey’s Anatomy, ABC, 2006. Director: M. Tinker | A woman is heartbroken after her fiancé has passed away. | 87 |
| Angry | Anne of Green Gables, Canadian Broadcast Corporation, 1985. Director: K. Sullivan. Canada: Anne of Green Gables Productions | An older woman speaks openly about a child’s physical flaws in front of her. | 62 |
| Angry | Enough, Columbia Pictures, 2002. Director: M. Apted. United States: Columbia Pictures | A wife confronts her husband about infidelity and he responds by exerting physical and emotional dominance. | 103 |
| Angry | Pretty Woman, Touchstone Pictures, 1990. Director: G. Marshall | A call girl attempts to buy clothing from an expensive store and the store clerks turn her away. | 65 |
| Fearful | Blood Diamond, Warner Bros Pictures, 2006. Director: E. Zwick | A father and son are walking together when numerous vehicles carrying militia approach. | 50 |
| Fearful | The Life Before Her Eyes, 2929 Productions, 2007. Director: V. Perelman | Two teenage girls are talking in a high school bathroom when they hear gunshots that continue to get closer. | 79 |
| Fearful | The Sixth Sense, Barry Mendel Productions, 1999 | A young boy wakes from his sleep and enters the kitchen to find his mother making dinner. | 70 |
| Neutral | The Other Sister, Mandeville Films, 1999 | A mother and daughter are sitting and discussing an art book. | 45 |
| Neutral | The Game, Polygram Filmed Entertainment, 1999 | One gentleman is explaining the rules of a game to another gentleman. | 85 |
| Neutral | The Notebook, New Line Cinema, 2004. Director: N. Cassavetes | An older gentleman and his doctor are conversing during a routine check-up. | 56 |

Table 1. Description of Film Clips Used.
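
As a quick consistency check, the clip lengths in Table 1 can be summarized and compared with the range and mean reported in the Introduction (45 to 103 seconds; mean = 71.87). The sketch below only restates the table values; the variable and function names are illustrative.

```python
from statistics import mean

# Clip lengths in seconds, copied from Table 1 in row order.
CLIP_LENGTHS_SEC = {
    "happy": [76, 62, 65],
    "sad": [98, 75, 87],
    "angry": [62, 103, 65],
    "fearful": [50, 79, 70],
    "neutral": [45, 85, 56],
}


def summarize_lengths(lengths_by_emotion):
    """Return (shortest, longest, mean) clip length across all categories."""
    all_lengths = [sec for clips in lengths_by_emotion.values() for sec in clips]
    return min(all_lengths), max(all_lengths), round(mean(all_lengths), 2)
```

`summarize_lengths(CLIP_LENGTHS_SEC)` gives a shortest clip of 45 seconds, a longest of 103 seconds, and a mean of 71.87 seconds, in agreement with the values stated in the text.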

Discussion

The manuscript describes three tasks used to assess the emotion recognition abilities of people with TBI. The goal of the described method was to compare response accuracy for facial and vocal emotional cues in isolation with response accuracy for these cues occurring simultaneously within a meaningful situational context. Film clips were included in the current study because their approximation of everyday situations made them more ecologically valid than isolated representations of emotion expressions. When carrying out this protocol with people with brain injury, it is important to consider the individual's visual scanning skills and ensure that the stimuli and response sheets are fully visible. To assist with this, the response sheets consisted of one line for each stimulus and used alternating color coding for each line (e.g., Line 1 = gray; Line 2 = white; Line 3 = gray; etc.).

The DANVA2 is fully available on-line. However, the on-line version of the DANVA-Faces was not used because it is pre-set to show each face for only two seconds. Given the speed of processing issues often experienced by people with TBI, we were concerned that errors made on this version might reflect slowed processing rather than impaired facial emotion recognition. Our presentation version of the DANVA-Faces increases the time each stimulus is shown to five seconds.

The stimuli used in the current study provided important insight into the emotion recognition abilities of people with TBI. However, there are many ways in which the stimuli may be improved for use in future studies of this nature. The stimuli used to assess recognition of isolated facial emotion expressions were from the DANVA-Faces task of the DANVA2. The benefit of using the DANVA-Faces stimuli is that they are standardized and have been used previously with people with TBI3,34. The 24 items include both high and low intensity portrayals of emotion by a small group of speakers, so participants are exposed to the same speaker producing variable expressions. In addition, the DANVA-Faces task uses color photographs rather than the black and white images often used. Not only are color images more contemporary, McDonald and Saunders15 argue they are likely less abstract to people with TBI. The primary issue with the stimuli used in the DANVA-Faces task is that the emotion expressions are static. As previously discussed, static images provide people more time to interpret the emotion being portrayed, lack the temporal cues that occur when the face is moving12,13,15, and are not processed in the same area of the brain as dynamic ones17-23. Thus, using only static facial emotion expressions when assessing emotion recognition does not allow for appropriate generalization to real-life processing. In addition, research has shown that we can identify emotions in photographs more accurately than in moving images, so tests using static images may overestimate abilities13,35,36. Therefore, future studies need to include isolated dynamic facial emotion stimuli to more accurately assess how people with TBI interpret emotion portrayed in only the face. These stimuli could potentially be created using the film clips by selecting frames in which the targeted emotion was portrayed, deleting the sound, and cropping the film to focus on only the head and neck of the character. This would eliminate additional contextual cues, including gestural cues and body language.
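As a rough illustration of the stimulus-creation idea described above (select the relevant segment, delete the sound, crop to the head and neck), the following Python sketch assembles an argument list for the ffmpeg command-line tool. It is not the procedure used in the current study. The function name, file names, time stamps, and crop coordinates are all hypothetical, and a fixed crop box is only adequate if the character's head stays in roughly the same place throughout the excerpt.

```python
from typing import List


def build_face_only_command(
    source: str,
    output: str,
    start_s: float,
    duration_s: float,
    crop_w: int,
    crop_h: int,
    crop_x: int,
    crop_y: int,
) -> List[str]:
    """Return an ffmpeg argument list for a silent, cropped excerpt.

    All argument values are supplied by the caller; nothing here is
    taken from the study itself.
    """
    return [
        "ffmpeg",
        "-ss", str(start_s),    # seek to the start of the target segment
        "-i", source,           # original film clip
        "-t", str(duration_s),  # keep only this many seconds
        "-an",                  # drop the audio track (voice and music)
        # crop=width:height:x:y keeps only the head-and-neck region
        "-vf", f"crop={crop_w}:{crop_h}:{crop_x}:{crop_y}",
        output,
    ]


# Hypothetical example values for one excerpt.
command = build_face_only_command(
    "clip.mp4", "clip_face_only.mp4",
    start_s=12.0, duration_s=8.0,
    crop_w=320, crop_h=360, crop_x=480, crop_y=40,
)
print(" ".join(command))
```

The list can be passed to `subprocess.run(command, check=True)` on a machine where ffmpeg is installed. Building the command separately from running it makes it easy to review the crop and timing values for every clip before any files are written.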

The vocal emotion expressions used in the current study were also taken from the DANVA2. Similar to the DANVA-Faces task, the DANVA-Voices task includes 24 items of both high and low intensity. Each item on the DANVA-Voices uses the same semantically neutral sentence ("I'm going out of the room now, and I'll be back later"). The repetition of a single sentence allows listeners to focus on the nonverbal emotion cues (i.e., tone of voice) and ignore the verbal content. This is in contrast to stimuli used in previous studies that included different (semantically neutral) verbal content for each item15. Unlike the results of the current study, McDonald and Saunders15 reported that people with TBI had significant difficulty identifying emotion in isolated vocal emotion expressions when compared to isolated facial emotion expressions. The verbal content of the vocal stimuli used in that study varied for each item, so it is possible that participants were focusing on interpreting the verbal content, which was semantically neutral and thus uninformative about the emotion. This assertion is supported by studies that have shown that people with (and without) TBI are more likely to use verbal content than nonverbal acoustic cues to interpret a vocal emotion expression37-39. Future studies should further explore the degree to which people with TBI make use of the nonverbal versus verbal content in isolated vocal expressions to determine whether the semantic information conveyed in the verbal message is more dominant than the emotion conveyed through tone of voice. This could be accomplished by creating stimuli with a limited number of sentences, both meaningful and neutral in verbal content. These sentences could then be combined with either congruent or incongruent nonverbal vocal cues. Sentences that are congruent in verbal and nonverbal emotion content are likely to lead to faster and more accurate identification of the emotion expressed40. However, it is unclear which cue participants would focus on when the verbal and nonverbal content is incongruent, and whether one channel would dominate throughout the task or the dominant cue would depend on the emotion portrayed through each channel.
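The proposed design, in which verbal content is crossed with tone of voice, can be sketched as a small trial list. The Python example below is only an illustration under stated assumptions: it uses the four target emotions from the current study, assumes one sentence type per emotion plus a semantically neutral one, and the condition labels and function names are hypothetical.

```python
import random
from itertools import product

EMOTIONS = ["happy", "sad", "angry", "fearful"]
# Assumption: one meaningful sentence type per emotion, plus neutral content.
VERBAL_CONTENT = EMOTIONS + ["neutral"]


def classify(verbal: str, tone: str) -> str:
    """Label a trial by how its verbal content relates to its tone of voice."""
    if verbal == "neutral":
        return "neutral-content"
    return "congruent" if verbal == tone else "incongruent"


def build_trials(seed: int = 0) -> list:
    """Cross every verbal content type with every tone, then shuffle."""
    trials = [
        {"verbal": verbal, "tone": tone, "condition": classify(verbal, tone)}
        for verbal, tone in product(VERBAL_CONTENT, EMOTIONS)
    ]
    random.Random(seed).shuffle(trials)  # fixed seed gives a reproducible order
    return trials


trials = build_trials()
counts = {}
for trial in trials:
    counts[trial["condition"]] = counts.get(trial["condition"], 0) + 1
print(len(trials), counts)
```

Fully crossing the two channels yields three times as many incongruent as congruent trials, so a real study would likely need to repeat or subsample cells to balance the conditions.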

Stimuli in the DANVA2 were created by presenting speakers with a short vignette intended to elicit a target emotion and then instructing those speakers to express that emotion via the face or voice. It has been suggested that such methods lead to exaggerated and stereotypical expressions that may lead to overstated recognition abilities41. The film clips, on the other hand, included facial and vocal expressions that occurred with situational context, making them more ecologically valid than isolated expressions. It is important to note that while not semantically neutral, the verbal content did not specify the emotional state of the characters in any way. The use of film clips of this nature with people with TBI is novel. While results of the current study suggest that the additional contextual information included in the film clips greatly facilitated emotion recognition, it is possible that participants were influenced by the additional cues present (e.g., body posture) and/or the cinematic design of the clips (e.g., pacing, lighting, and framing)42,43. Participants may also have been influenced by the presence of background music, so results need to be interpreted and generalized carefully. The influence of affective background music should be further investigated in future studies by comparing film clips with and without this cue to ascertain whether the presence of congruent background music facilitates emotion recognition. While this information would not necessarily be generalizable to everyday social interactions, it could have important implications for treatment of emotion recognition deficits. It is also possible that participants in the current study were relying on the body posture and gestures of the characters in the film clips to facilitate emotion perception. Again, finding film clips that manipulate the presence and absence of such cues would be important for exploring which cues people with TBI make most use of when interpreting emotions in everyday situations. However, identifying commercial film clips that manipulate each of these extraneous factors to varying degrees poses a significant challenge. Instead, future research might focus on creating high quality film clips, set within various situational settings, that show characters engaged in meaningful interactions. These clips could be controlled for the presence and absence of contextual cues, postures, gestures, and background music. In addition, the clips could be used to assess recognition of dynamic isolated facial expressions as described earlier, recognition of isolated vocal emotion expressions, these two cues combined, and then the addition of contextual information. This would provide much needed insight into how people with TBI perceive emotion and might allow for better generalization to real-life settings.

Other methodological considerations for future studies like the one presented here are the number of target emotions and the response format chosen. The current study included four primary emotions: happy, sad, angry, and fearful. Neutral was also evaluated in the film clips task. This selection of target emotions includes only one positive emotion (i.e., happy), making it difficult to compare response accuracy for positive versus negative emotions. Also, such a limited set of emotions makes it easier for participants to rely upon process of elimination when making their selections, a strategy that could not be reliably used in everyday interactions. Future studies should expand upon the number of emotions included and should also include isolated facial and vocal expressions portraying neutral. Further, angry film clips were not well identified by either group, suggesting that the film clips themselves may have been poorly chosen. The average identification rate of the angry film clips in the previous validation study by Zupan and Babbage (Zupan, B. & Babbage, D. R. Emotion elicitation stimuli from film clips and narrative text. Manuscript submitted to J Soc Psyc) was only 73%, compared to 85-90% for the remaining four categories. Previous studies using film clips also report difficulty selecting angry clips33,44,45. It remains unclear why it is more challenging to select film clips that lead to high identification of anger. It may be because anger is a more complicated emotion that serves a range of social functions (e.g., expressing dislike versus asserting authority), requiring the perceiver to evaluate a situation in terms of its relevance to their own belief systems46.

In addition, future studies should consider whether the valence and intensity of the film clip shown influence participant responses, particularly for neutral clips. While the current study showed no effect of the order in which the 15 film clips were shown to participants, it is still possible that some clips may have led to greater feelings of empathy, resulting in a carry-over effect when the next clip was played. While we did have participants complete a questionnaire to evaluate their general capacity for empathy (see Neumann, Zupan, Malec and Hammond47), we did not specifically ask participants to indicate whether or not they empathized with the characters, and if so, to what degree. These questions may provide greater insight into why our results indicated that participants with TBI were more likely to provide negative responses to neutral stimuli.

The current study used a forced-choice response format, a commonly used paradigm in emotion recognition studies. It is possible that the limited options constrained participants to select a single emotion when they perceived the presence of multiple emotions48. Additionally, they may have selected an emotion they did not perceive rather than choose the alternative response (i.e., I don't know). Using a free-choice response format with people with severe traumatic brain injury could result in additional and/or more problematic issues (e.g., word generation difficulties; long streams of explanations versus a single word). Therefore, it might be best to first adopt a forced-choice format that allows an additional free-choice word or comment to be added before moving on to the next item.
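One way to picture the suggested hybrid format is as a response record that requires one forced-choice label and accepts an optional free-text comment. The Python sketch below is an illustration only, not the study's data-collection software. The option list assumes the film clips task (four target emotions, neutral, and the "I don't know" alternative), and the class, field, and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed forced-choice options for the film clips task.
OPTIONS = ("happy", "sad", "angry", "fearful", "neutral", "I don't know")


@dataclass
class Response:
    item_id: str
    choice: str                    # required forced-choice label
    comment: Optional[str] = None  # optional free-choice word or comment

    def __post_init__(self) -> None:
        if self.choice not in OPTIONS:
            raise ValueError(f"choice must be one of {OPTIONS}")
        if self.comment is not None:
            # Store blank comments as None so they are easy to filter out.
            self.comment = self.comment.strip() or None


def is_correct(response: Response, target: str) -> bool:
    """Score accuracy from the forced choice only; comments are kept for review."""
    return response.choice == target


# Hypothetical example: the participant picks one label and adds a remark.
example = Response("clip_07", "sad", "a little angry too")
print(is_correct(example, "sad"), example.comment)
```

Keeping the comment separate from the scored choice preserves comparability with standard forced-choice accuracy while still recording cases where a participant perceived more than one emotion.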

The use of film clips in the current study was a novel approach to assessing emotion recognition by people with TBI. It was hypothesized that people with TBI would find the multimodal nature of the film clips more complex, and thus more challenging than isolated facial and vocal cues. However, results suggested that rather than increase processing demands, the presence of numerous affective cues, including contextual information, facilitated processing. Yet, in everyday situations where a multitude of cues are presumably present, emotion recognition is a significant issue for people with TBI. Thus, while the film clips are more ecologically valid than isolated emotion expressions, they are not fully representative of the cues available in everyday social interactions. It could be that acting as an observer versus a participant allows for better integration of the available emotion cues.

Disclosures

The authors have nothing to disclose.

Acknowledgements

This work was supported by the Humanities Research Institute at Brock University in St. Catharines, Ontario, Canada and by the Cannon Research Center at Carolinas Rehabilitation in Charlotte, North Carolina, USA.

Materials

| Diagnostic Analysis of Nonverbal Accuracy-2 | Department of Psychology, Emory University, Atlanta, GA | DANVA-Faces subtest, DANVA-Voices subtest | |

| Computer | Apple iMac Desktop, 27" display | ||

| Statistical Analysis Software | SPSS | University Licensed software for data analysis | |

| Happy Film Clip 1 | Sweet Home Alabama, D&D Films, 2002, Director: A. Tennant | A man surprises his girlfriend by proposing in a jewelry store | |

| Happy Film Clip 2 | Wedding Crashers, New Line Cinema, 2005, Director: D. Dobkin | A couple is sitting on the beach and flirting while playing a hand game | |

| Happy Film Clip 3 | Remember the Titans, Jerry Bruckheimer Films, 2000, Director: B. Yakin | An African American football coach and his family are accepted by their community when the school team wins the football championship | |

| Sad Film Clip 1 | Grey's Anatomy, ABC, 2006, Director: P. Horton | A father is only able to communicate with his family using his eyes. | |

| Sad Film Clip 2 | Armageddon, Touchstone Pictures, 1998, Director: M. Bay | A daughter is saying goodbye to her father who is in space on a dangerous mission | |

| Sad Film Clip 3 | Grey's Anatomy, ABC, 2006, Director: M. Tinker | A woman is heartbroken after her fiancé has died | |

| Angry Film Clip 1 | Anne of Green Gables, Canadian Broadcasting Corporation, 1985, Director: K. Sullivan | An older woman speaks openly about a child's physical appearance in front of her | |

| Angry Film Clip 2 | Enough, Columbia Pictures, 2002, Director: M. Apted | A wife confronts her husband about an affair when she smells another woman's perfume on his clothing | |

| Angry Film Clip 3 | Pretty Woman, Touchstone Pictures, 1990, Director: G. Marshall | A call girl attempts to purchase clothing in an expensive boutique and is turned away | |

| Fearful Film Clip 1 | Blood Diamond, Warner Bros Pictures, 2006, Director: E. Zwick | Numerous vehicles carrying militia approach a man and his son while they are out walking | |

| Fearful Film Clip 2 | The Life Before Her Eyes, 2929 Productions, 2007, Director: V. Perelman | Two teenage girls are talking in the school bathroom when they hear gunshots that continue to get closer | |

| Fearful Film Clip 3 | The Sixth Sense, Barry Mendel Productions, 1999, Director: M. N. Shyamalan | A young boy enters the kitchen in the middle of the night and finds his mother behaving very atypically | |

| Neutral Film Clip 1 | The Other Sister, Mandeville Films, 1999, Director: G. Marshall | A mother and daughter are discussing an art book while sitting in their living room | |

| Neutral Film Clip 2 | The Game, Polygram Filmed Entertainment, 1999, Director: D. Fincher | One gentleman is explaining the rules of a game to another | |

| Neutral Film Clip 3 | The Notebook, New Line Cinema, 2004, Director: N. Cassavetes | An older gentleman and his doctor are conversing during a routine check-up |

References

- Langlois, J., Rutland-Brown, W., Wald, M. The epidemiology and impact of traumatic brain injury: A brief overview. J. Head Trauma Rehabil. 21 (5), 375-378 (2006).

- Babbage, D. R., Yim, J., Zupan, B., Neumann, D., Tomita, M. R., Willer, B. Meta-analysis of facial affect recognition difficulties after traumatic brain injury. Neuropsychology. 25 (3), 277-285 (2011).

- Neumann, D., Zupan, B., Tomita, M., Willer, B. Training emotional processing in persons with brain injury. J. Head Trauma Rehabil. 24, 313-323 (2009).

- Bornhofen, C., McDonald, S. Treating deficits in emotion perception following traumatic brain injury. Neuropsychol Rehabil. 18 (1), 22-44 (2008).

- Zupan, B., Neumann, D., Babbage, D. R., Willer, B. The importance of vocal affect to bimodal processing of emotion: implications for individuals with traumatic brain injury. J Commun Disord. 42 (1), 1-17 (2009).

- Bibby, H., McDonald, S. Theory of mind after traumatic brain injury. Neuropsychologia. 43 (1), 99-114 (2005).

- Ferstl, E. C., Rinck, M., von Cramon, D. Y. Emotional and temporal aspects of situation model processing during text comprehension: an event-related fMRI study. J. Cogn. Neurosci. 17 (5), 724-739 (2005).

- Milders, M., Fuchs, S., Crawford, J. R. Neuropsychological impairments and changes in emotional and social behaviour following severe traumatic brain injury. J. Clin. Exp. Neuropsychol. 25 (2), 157-172 (2003).

- Spikman, J., Milders, M., Visser-Keizer, A., Westerhof-Evers, H., Herben-Dekker, M., van der Naalt, J. Deficits in facial emotion recognition indicate behavioral changes and impaired self-awareness after moderate to severe traumatic brain injury. PloS One. 8 (6), e65581 (2013).

- Zupan, B., Neumann, D. Affect Recognition in Traumatic Brain Injury: Responses to Unimodal and Multimodal Media. J. Head Trauma Rehabil. 29 (4), E1-E12 (2013).

- Radice-Neumann, D., Zupan, B., Babbage, D. R., Willer, B. Overview of impaired facial affect recognition in persons with traumatic brain injury. Brain Inj. 21 (8), 807-816 (2007).

- McDonald, S. Are You Crying or Laughing? Emotion Recognition Deficits After Severe Traumatic Brain Injury. Brain Impair. 6 (01), 56-67 (2005).

- Cunningham, D. W., Wallraven, C. Dynamic information for the recognition of conversational expressions. J. Vis. 9 (13), 1-17 (2009).

- Elfenbein, H. A., Marsh, A. A., Ambady, N. Emotional Intelligence and the Recognition of Emotion from Facial Expressions. The Wisdom in Feeling: Psychological Processes in Emotional Intelligence. 37-59 (2002).

- McDonald, S., Saunders, J. C. Differential impairment in recognition of emotion across different media in people with severe traumatic brain injury. J. Int. Neuropsychol. Soc. 11 (4), 392-399 (2005).

- Williams, C., Wood, R. L. Impairment in the recognition of emotion across different media following traumatic brain injury. J. Clin. Exp. Neuropsychol. 32 (2), 113-122 (2010).

- Adolphs, R., Tranel, D., Damasio, A. R. Dissociable neural systems for recognizing emotions. Brain Cogn. 52 (1), 61-69 (2003).

- Biele, C., Grabowska, A. Sex differences in perception of emotion intensity in dynamic and static facial expressions. Exp. Brain Res. 171, 1-6 (2006).

- Collignon, O., Girard, S., et al. Audio-visual integration of emotion expression. Brain Res. 1242, 126-135 (2008).

- LaBar, K. S., Crupain, M. J., Voyvodic, J. T., McCarthy, G. Dynamic perception of facial affect and identity in the human brain. Cereb. Cortex. 13 (10), 1023-1033 (2003).

- Mayes, A. K., Pipingas, A., Silberstein, R. B., Johnston, P. Steady state visually evoked potential correlates of static and dynamic emotional face processing. Brain Topogr. 22 (3), 145-157 (2009).

- Sato, W., Yoshikawa, S. Enhanced Experience of Emotional Arousal in Response to Dynamic Facial Expressions. J. Nonverbal Behav. 31 (2), 119-135 (2007).

- Schulz, J., Pilz, K. S. Natural facial motion enhances cortical responses to faces. Exp. Brain Res. 194 (3), 465-475 (2009).

- O’Toole, A., Roark, D., Abdi, H. Recognizing moving faces: A psychological and neural synthesis. Trends Cogn. Sci. 6 (6), 261-266 (2002).

- Dimoska, A., McDonald, S., Pell, M. C., Tate, R. L., James, C. M. Recognizing vocal expressions of emotion in patients with social skills deficits following traumatic brain injury. J. Int. Neuropsychol. Soc. 16 (2), 369-382 (2010).

- McDonald, S., Flanagan, S., Rollins, J., Kinch, J. TASIT: A new clinical tool for assessing social perception after traumatic brain injury. J. Head Trauma Rehab. 18 (3), 219-238 (2003).

- Channon, S., Pellijeff, A., Rule, A. Social cognition after head injury: sarcasm and theory of mind. Brain Lang. 93 (2), 123-134 (2005).

- Henry, J. D., Phillips, L. H., Crawford, J. R., Theodorou, G., Summers, F. Cognitive and psychosocial correlates of alexithymia following traumatic brain injury. Neuropsychologia. 44 (1), 62-72 (2006).

- Martín-Rodríguez, J. F., León-Carrión, J. Theory of mind deficits in patients with acquired brain injury: a quantitative review. Neuropsychologia. 48 (5), 1181-1191 (2010).

- McDonald, S., Flanagan, S. Social perception deficits after traumatic brain injury: interaction between emotion recognition, mentalizing ability, and social communication. Neuropsychology. 18 (3), 572-579 (2004).

- Milders, M., Ietswaart, M., Crawford, J. R., Currie, D. Impairments in theory of mind shortly after traumatic brain injury and at 1-year follow-up. Neuropsychology. 20 (4), 400-408 (2006).

- Nowicki, S. The Manual for the Receptive Tests of the Diagnostic Analysis of Nonverbal Accuracy 2 (DANVA2). (2008).

- Gross, J. J., Levenson, R. W. Emotion Elicitation Using Films. Cogn. Emot. 9 (1), 87-108 (1995).

- Spell, L. A., Frank, E. Recognition of Nonverbal Communication of Affect Following Traumatic Brain Injury. J. Nonverbal Behav. 24 (4), 285-300 (2000).

- Edgar, C., McRorie, M., Sneddon, I. Emotional intelligence, personality and the decoding of non-verbal expressions of emotion. Pers. Ind. Dif. 52, 295-300 (2012).

- Nowicki, S., Mitchell, J. Accuracy in Identifying Affect in Child and Adult Faces and Voices and Social Competence in Preschool Children. Genet. Soc. Gen. Psychol. 124 (1), (1998).

- Astesano, C., Besson, M., Alter, K. Brain potentials during semantic and prosodic processing in French. Cogn. Brain Res. 18, 172-184 (2004).

- Kotz, S. A., Paulmann, S. When emotional prosody and semantics dance cheek to cheek: ERP evidence. Brain Res. 1151, 107-118 (2007).

- Paulmann, S., Pell, M. D., Kotz, S. How aging affects the recognition of emotional speech. Brain Lang. 104 (3), 262-269 (2008).

- Pell, M. D., Jaywant, A., Monetta, L., Kotz, S. A. Emotional speech processing: disentangling the effects of prosody and semantic cues. Cogn. Emot. 25 (5), 834-853 (2011).

- Bänziger, T., Scherer, K. R. Using Actor Portrayals to Systematically Study Multimodal Emotion Expression: The GEMEP Corpus. Affective Computing and Intelligent Interaction, LNCS 4738. 476-487 (2007).

- Busselle, R., Bilandzic, H. Fictionality and Perceived Realism in Experiencing Stories: A Model of Narrative Comprehension and Engagement. Commun. Theory. 18 (2), 255-280 (2008).

- Zagalo, N., Barker, A., Branco, V. Story reaction structures to emotion detection. Proceedings of the 1st ACM Work. Story Represent. Mech. Context – SRMC. 04, 33-38 (2004).

- Hagemann, D., Naumann, E., Maier, S., Becker, G., Lurken, A., Bartussek, D. The Assessment of Affective Reactivity Using Films: Validity, Reliability and Sex Differences. Pers. Ind. Dif. 26, 627-639 (1999).

- Hewig, J., Hagemann, D., Seifert, J., Gollwitzer, M., Naumann, E., Bartussek, D. Brief Report. A revised film set for the induction of basic emotions. Cogn. Emot. 19 (7), 1095-1109 (2005).

- Wranik, T., Scherer, K. R. Why Do I Get Angry? A Componential Appraisal Approach. International Handbook of Anger. 243-266 (2010).

- Neumann, D., Zupan, B., Malec, J. F., Hammond, F. M. Relationships between alexithymia, affect recognition, and empathy after traumatic brain injury. J. Head Trauma Rehabil. 29 (1), E18-E27 (2013).

- Russell, J. A. Is there universal recognition of emotion from facial expression? A review of the cross-cultural studies. Psychol. Bull. 115 (1), 102-141 (1994).